Summary

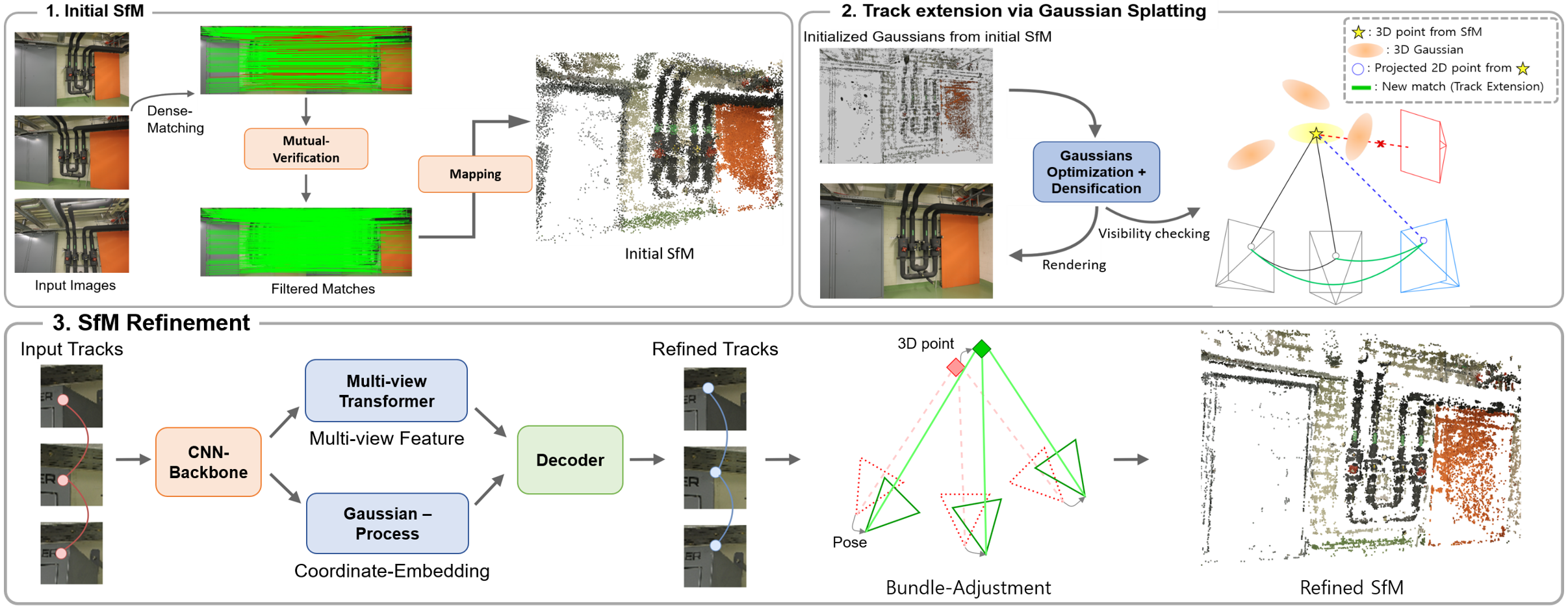

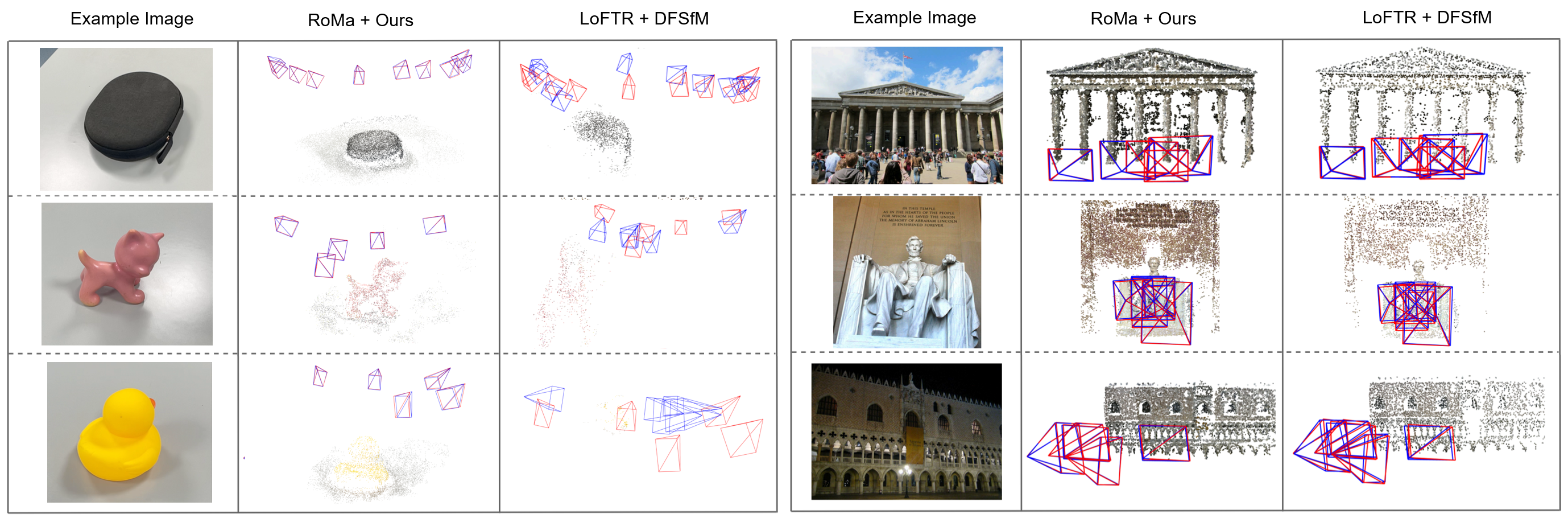

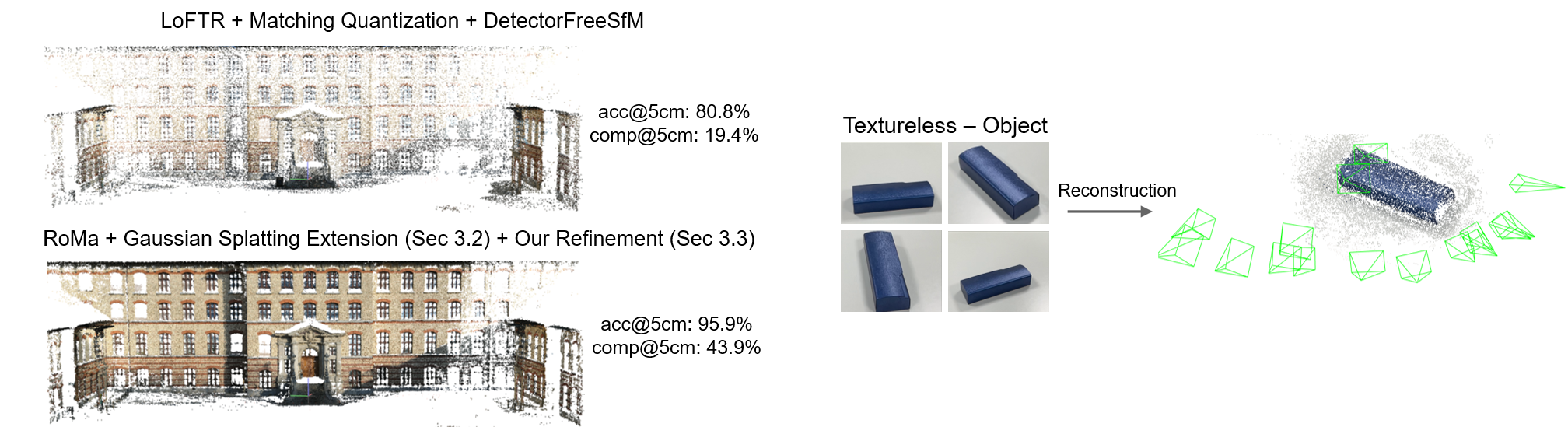

We present Dense-SfM, a novel SfM framework that integrates dense feature matching for accurate and dense 3D reconstruction from multi-view images. To resolve the track fragmentation problem inherent in pairwise dense matching, Dense-SfM uses Gaussian Splatting to reason about the visibility of 3D points and to extend short tracks to additional views without sacrificing subpixel accuracy. The extended tracks are then refined by a novel multi-view kernelized matching module that combines transformer and Gaussian Process architectures, and the reconstruction is further optimized by iterative bundle adjustment.
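To make the track fragmentation problem concrete, here is a toy illustration. It is not Dense-SfM's code. It assumes that tracks are built by merging pairwise matches with a union-find over (image, x, y) observations, which is a common construction in SfM pipelines. The function `build_tracks`, the `grid` parameter, and the match coordinates are all hypothetical. A pairwise dense matcher predicts slightly different subpixel positions for the same 3D point in each image pair. With exact coordinates, those predictions never coincide, so one 3D point splits into several two-view tracks. Snapping coordinates to a grid merges them again, but it moves each observation by up to half a cell. That is the kind of subpixel accuracy loss the summary says Dense-SfM avoids.

```python
from collections import defaultdict


class UnionFind:
    """Minimal disjoint-set structure with path halving."""

    def __init__(self):
        self.parent = {}

    def find(self, x):
        self.parent.setdefault(x, x)
        while self.parent[x] != x:
            self.parent[x] = self.parent[self.parent[x]]  # path halving
            x = self.parent[x]
        return x

    def union(self, a, b):
        ra, rb = self.find(a), self.find(b)
        if ra != rb:
            self.parent[rb] = ra


def build_tracks(pair_matches, grid=None):
    """Hypothetical track builder (illustration only).

    pair_matches maps (img_a, img_b) -> list of ((xa, ya), (xb, yb)).
    Each observation is keyed as (image_id, x, y). If `grid` is given,
    coordinates are snapped to a grid with that cell size (quantization);
    otherwise the exact subpixel coordinates are used as keys.
    """
    uf = UnionFind()

    def node(img, pt):
        x, y = pt
        if grid is not None:
            x, y = round(x / grid) * grid, round(y / grid) * grid
        return (img, x, y)

    for (ia, ib), matches in pair_matches.items():
        for pa, pb in matches:
            uf.union(node(ia, pa), node(ib, pb))

    # Group every observation by its set representative.
    groups = defaultdict(list)
    for n in list(uf.parent):
        groups[uf.find(n)].append(n)
    return sorted(sorted(t) for t in groups.values())


# One 3D point seen in images 0, 1 and 2. Each pair predicts slightly
# different subpixel positions for it (made-up numbers).
matches = {
    (0, 1): [((10.0, 20.0), (30.2, 40.1))],
    (1, 2): [((30.4, 39.9), (50.0, 60.0))],
    (0, 2): [((10.1, 19.8), (50.3, 60.2))],
}

exact_tracks = build_tracks(matches)            # three fragmented 2-view tracks
quantized_tracks = build_tracks(matches, grid=1.0)  # one 3-view track, snapped coords
print(len(exact_tracks), len(quantized_tracks))
```

With exact keys the single 3D point yields three separate two-view tracks. With `grid=1.0` it yields one three-view track, but every observation now sits on an integer pixel. Dense-SfM instead uses Gaussian Splatting visibility to extend short tracks to more views while keeping subpixel positions.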